Before Kubernetes becomes powerful, it must first become predictable.

Predictability comes from understanding its core objects — the fundamental building blocks that everything else relies on.

Many engineers rush to Helm, Operators, or GitOps without mastering these basics. That usually leads to fragile clusters, hard-to-debug issues, and poor production reliability.

This week, we will build our understanding of Kubernetes from the ground up: starting with what Kubernetes really is, moving through each core object, and ending with enterprise-ready patterns.

What Kubernetes Really Is (Not What People Say)

At its core, Kubernetes is a distributed control system.

It does not:

- “Run containers magically”

- “Automatically make apps scalable”

- “Fix broken applications”

Instead, Kubernetes:

- Maintains desired state

- Continuously compares it to actual state

- Takes corrective action through controllers

Everything you deploy in Kubernetes is just data submitted to the API server.

The control plane reacts to that data.

Key idea:

Kubernetes does nothing unless you declare what you want.
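The compare-and-correct cycle can be sketched in a few lines of Python. Treat this as a toy model under stated assumptions: the `reconcile` function and the dictionary shapes are hypothetical and are not part of any Kubernetes API. Real controllers watch the API server rather than receiving plain dictionaries.

```python
def reconcile(desired, actual):
    """Return the actions needed to make `actual` match `desired`.

    Both arguments map an object name to its spec (hypothetical shapes,
    used only for illustration).
    """
    actions = []
    for name, spec in desired.items():
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    for name in actual:
        if name not in desired:
            actions.append(("delete", name))
    return actions


desired = {"web": {"image": "nginx:1.25"}, "api": {"image": "api:2"}}
actual = {"web": {"image": "nginx:1.24"}, "old-job": {"image": "job:1"}}

print(reconcile(desired, actual))
print(reconcile({}, {}))  # nothing declared, nothing to do
```

The first call reports one update, one create, and one delete. The second returns an empty list, which mirrors the key idea: with no declared state, there is nothing to reconcile.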

What Problem Kubernetes Solves

Life Before Kubernetes

Before Kubernetes, teams:

- Ran applications directly on virtual machines

- Manually installed software

- Manually scaled instances

- Manually handled failures

This approach does not scale. Manual operations become slow and error-prone as the number of machines and services grows.

What Kubernetes Introduces

Kubernetes introduces declarative infrastructure.

Instead of saying:

“Start this container now”

You say:

“I want 3 replicas of this application running at all times”

Kubernetes continuously works to make reality match your declaration.

This is the most important concept you must understand.

In contrast, Kubernetes provides:

- Declarative configuration: You define your desired state, and Kubernetes works to achieve and maintain it

- Self-healing: It restarts failed containers and replaces missing Pods that a controller manages

- Automatic scaling: It increases or decreases replicas based on demand, once you configure autoscaling (for example with a HorizontalPodAutoscaler)

- Service discovery & routing: It provides networking and load balancing across services

- Workload portability: Your apps run consistently across clouds and environments

Kubernetes automates many tasks that used to be manual and error-prone, such as scaling, networking, and fault recovery, which is why organizations widely adopt it for distributed systems.

Kubernetes offers a rich feature set that supports both stateless and stateful workloads:

- Automated rollouts, scaling, and rollbacks: Kubernetes continuously manages replicas and health states per your configuration.

- Service discovery and networking: It assigns DNS names and routes traffic reliably between services.

- Storage orchestration: It abstracts storage so persistent data can be used consistently.

- Declarative configuration: You submit YAML manifests that define your desired state, and Kubernetes makes it real.

- Cross-environment support: Works on clouds, edge environments, and local developer machines.

- Extensibility: You can add custom resources, controllers, and operators to meet advanced needs.

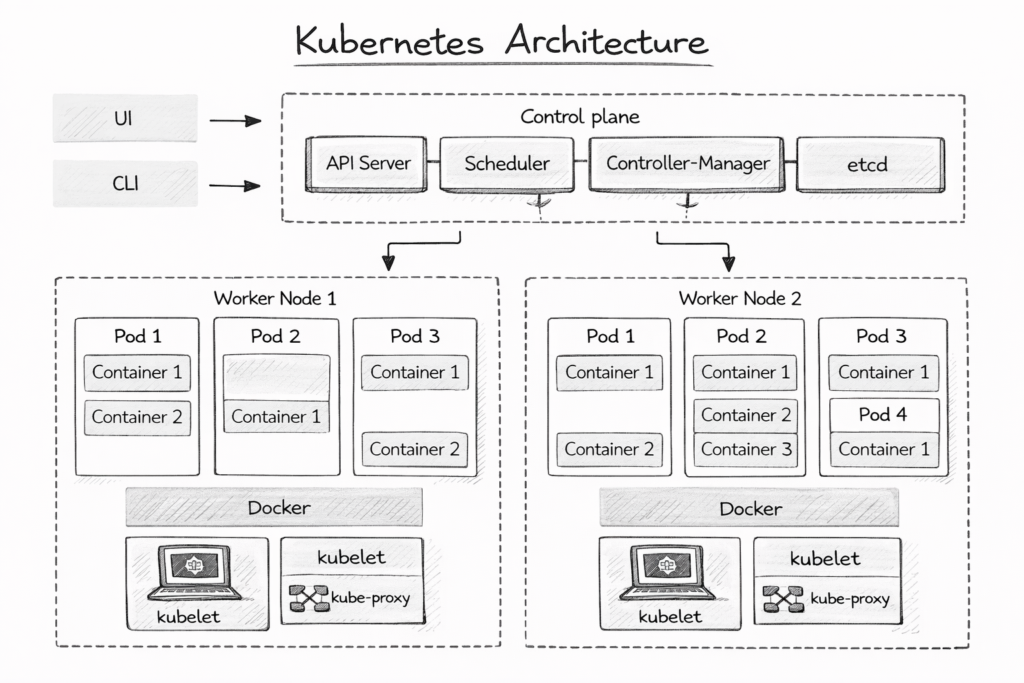

Kubernetes Architecture

Before we deploy anything, we must understand where Kubernetes logic lives.

Control Plane (The Brain)

The control plane is responsible for:

- Accepting requests

- Making decisions

- Maintaining desired state

It includes:

- API Server – Entry point for all commands

- Scheduler – Decides where Pods run

- Controller Manager – Runs control loops

- etcd – Stores cluster state

You never talk directly to nodes.

You always talk to the API Server.

Kubernetes responds to changes by:

- Accepting your desired specification via the API server

- Storing that intent in etcd

- Scheduling and reconciling resources repeatedly until the system matches your definition

This fundamental feedback loop (desired state vs. actual state) is what makes Kubernetes reliable.

Worker Nodes (The Muscle)

Worker nodes:

- Run containers

- Execute workloads

- Report status back to the control plane

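Each node runs:

- kubelet – Talks to the API server and makes sure the containers described in Pod specs are running

- kube-proxy – Maintains the network rules that implement Services on the node

- container runtime – Pulls images and runs containers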

🧱 The Kubernetes Object Model (Critical Concept)

Every Kubernetes resource is an object.

An object has:

- Metadata (name, namespace, labels)

- Spec (desired state — what you want)

- Status (actual state — what exists)

You never edit status.

Kubernetes owns it.

    apiVersion: v1
    kind: Pod
    metadata:
      name: example
    spec:
      containers:
        - name: app
          image: nginx

This simple YAML defines intent, not execution.

1️⃣ Pods — The Smallest Deployable Unit

🧠 Theory: What a Pod Actually Is

A Pod is not a container.

A Pod is:

- A logical wrapper around one or more containers

- A shared network namespace

- A shared storage context

Containers inside a Pod:

- Share the same IP address

- Communicate via localhost

- Start and stop together

This design exists to support tight coupling, such as:

- App + sidecar (logging, proxy, agent)

- Init containers

- Co-dependent processes

🛠 Practical: Your First Pod

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx-pod
    spec:
      containers:
        - name: nginx
          image: nginx:1.25
          ports:
            - containerPort: 80

Apply it:

    kubectl apply -f pod.yaml

Inspect it:

    kubectl get pods
    kubectl describe pod nginx-pod

🔍 What Happens Internally

- YAML is sent to the API Server

- Scheduler assigns a node

- Kubelet pulls the image

- Containers start

- Status is updated

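If the container crashes:

- The kubelet restarts it on the same node (subject to the Pod's restartPolicy)

If the node dies:

- The Pod is lost permanently, because nothing recreates a bare Pod on another node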

Enterprise rule:

Never deploy raw Pods in production.

Pods are ephemeral.

2️⃣ ReplicaSets — Keeping Pods Alive

🧠 Theory: Why Pods Are Not Enough

A bare Pod (one created without a controller):

- Is not rescheduled onto another node if its node fails

- Is not recreated if it is deleted

A ReplicaSet ensures:

- A fixed number of identical Pods

- Continuous reconciliation

If desired replicas ≠ actual replicas, the ReplicaSet controller creates or deletes Pods until the two match.
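As a rough illustration of that behaviour, the sketch below keeps a list of Pods at a target count for a given label selector. It is a simplified model, not the real controller: `reconcile_replicas`, `matches`, `count`, and the Pod dictionaries are hypothetical names invented for this example.

```python
import itertools

_pod_ids = itertools.count(1)  # hypothetical name generator for new Pods


def matches(selector, labels):
    """True when every key/value pair in the selector appears in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def reconcile_replicas(pods, selector, replicas, template_labels):
    """Return a Pod list in which exactly `replicas` Pods match `selector`."""
    owned = [p for p in pods if matches(selector, p["labels"])]
    others = [p for p in pods if not matches(selector, p["labels"])]
    while len(owned) < replicas:  # too few: create Pods from the template
        name = f"nginx-rs-{next(_pod_ids)}"
        owned.append({"name": name, "labels": dict(template_labels)})
    del owned[replicas:]  # too many: remove the surplus
    return others + owned


def count(pods, selector):
    """Number of Pods whose labels match the selector."""
    return sum(matches(selector, p["labels"]) for p in pods)


selector = {"app": "nginx"}
pods = [{"name": "db-0", "labels": {"app": "db"}}]

pods = reconcile_replicas(pods, selector, 3, selector)
print(count(pods, selector))  # 3

pods.pop()  # simulate a Pod being deleted by hand
print(count(pods, selector))  # 2
pods = reconcile_replicas(pods, selector, 3, selector)
print(count(pods, selector))  # 3
```

Pods that do not match the selector (here `db-0`) are left alone, which is why the selector and the template labels must agree.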

🛠 Practical: ReplicaSet Example

    apiVersion: apps/v1
    kind: ReplicaSet
    metadata:
      name: nginx-rs
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
            - name: nginx
              image: nginx:1.25

🔍 Enterprise Insight

ReplicaSets are rarely used directly.

They exist primarily as an implementation detail of Deployments.

3️⃣ Deployments — Your Primary Workload Object

🧠 Theory: Why Deployments Exist

Deployments solve real production problems:

- Rolling updates

- Rollbacks

- Versioned releases

- Zero-downtime changes

A Deployment manages:

- ReplicaSets

- Pod lifecycle

- Update strategies
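To make the rolling update idea more concrete, here is a simplified simulation of how old Pods are swapped for new ones in small steps. The parameter names mirror the `maxSurge` and `maxUnavailable` fields of the RollingUpdate strategy, but the function itself is a hypothetical sketch: it uses absolute numbers only and assumes every new Pod becomes ready immediately, which a real cluster does not guarantee.

```python
def rolling_update(replicas, max_surge=1, max_unavailable=0):
    """Return the (old, new) Pod counts after each step of a simplified rollout."""
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("max_surge and max_unavailable cannot both be 0")
    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0 or new < replicas:
        # Scale up the new version without exceeding replicas + max_surge.
        new += min(replicas - new, replicas + max_surge - (old + new))
        # Scale down the old version while keeping enough Pods available.
        old -= min(old, (old + new) - (replicas - max_unavailable))
        steps.append((old, new))
    return steps


for old, new in rolling_update(3):
    print(f"old={old} new={new}")
```

With three replicas and the illustrative settings above, the rollout moves through (3, 0), (2, 1), (1, 2), and (0, 3), so three Pods are available at every step.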

🛠 Practical: Deployment Example

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: web-deployment
    spec:
      replicas: 3
      strategy:
        type: RollingUpdate
      selector:
        matchLabels:
          app: web
      template:
        metadata:
          labels:
            app: web
        spec:
          containers:
            - name: web
              image: nginx:1.25
              ports:
                - containerPort: 80

Apply:

    kubectl apply -f deployment.yaml

Update image:

    kubectl set image deployment/web-deployment web=nginx:1.26

Rollback:

    kubectl rollout undo deployment/web-deployment

🔍 Enterprise Pattern

Deployments are ideal for:

- Stateless services

- APIs

- Frontends

- Background workers

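Think of a Deployment as a versioned state machine: each change to the Pod template produces a new revision, backed by its own ReplicaSet, that you can roll back to.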

4️⃣ Services — Stable Networking in a Dynamic World

🧠 Theory: Why Services Are Mandatory

Pods:

- Get new IPs when recreated

- Are ephemeral

A Service provides:

- Stable virtual IP (ClusterIP)

- DNS name

- Load balancing

🛠 Practical: ClusterIP Service

    apiVersion: v1
    kind: Service
    metadata:
      name: web-service
    spec:
      type: ClusterIP
      selector:
        app: web
      ports:
        - port: 80
          targetPort: 80

Access it via DNS from another Pod in the same namespace:

    curl http://web-service
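The short name only resolves from Pods in the same namespace as the Service. The sketch below lists the longer forms that cluster DNS typically accepts. The helper `service_dns_names` is hypothetical, and `cluster.local` is the usual default cluster domain, which an administrator may have changed.

```python
def service_dns_names(name, namespace="default", cluster_domain="cluster.local"):
    """Return the DNS names that resolve to a Service, shortest first."""
    return [
        name,                                        # same namespace only
        f"{name}.{namespace}",                       # from any namespace
        f"{name}.{namespace}.svc",
        f"{name}.{namespace}.svc.{cluster_domain}",  # fully qualified
    ]


for dns_name in service_dns_names("web-service", namespace="prod"):
    print(dns_name)
```

When calling a Service in a different namespace, use at least the `name.namespace` form.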

Service Types (Enterprise View)

| Type | Use Case |
|---|---|
| ClusterIP | Internal services |
| NodePort | Debug / legacy |
| LoadBalancer | Cloud exposure |
| Headless (ClusterIP with clusterIP: None) | Stateful workloads |

5️⃣ Namespaces — Logical Isolation

🧠 Theory: Why Namespaces Matter

Namespaces provide:

- Resource isolation

- Access control boundaries

- Organizational structure

Namespaces are not security boundaries by default. They become effective boundaries only when you pair them with RBAC, NetworkPolicies, and ResourceQuotas.

🛠 Practical: Namespace Example

    kubectl create namespace prod
    kubectl get ns

Deploy into it:

    kubectl apply -f deployment.yaml -n prod

Enterprise Namespace Strategy

- dev, staging, prod

- Team-based namespaces

- Platform vs application separation

6️⃣ ConfigMaps — Configuration Without Rebuilds

🧠 Theory

ConfigMaps allow you to:

- Externalize configuration

- Avoid rebuilding images

- Change behavior without changing application code (Pods that read values as environment variables must be restarted to pick up changes)

🛠 Practical Example

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: app-config
    data:
      ENV: production
      LOG_LEVEL: info

Inject it into a container as environment variables (this goes under the container entry in the Pod spec):

    envFrom:
      - configMapRef:
          name: app-config
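On the application side, the injected keys show up as ordinary environment variables. A minimal sketch of how an app might read them follows. The `load_config` function and its fallback values are assumptions made for illustration; only the `ENV` and `LOG_LEVEL` keys come from the ConfigMap above.

```python
import os


def load_config(environ=os.environ):
    """Read the settings that the ConfigMap injects as environment variables."""
    return {
        "env": environ.get("ENV", "development"),      # fallback is an assumption
        "log_level": environ.get("LOG_LEVEL", "info"),  # fallback is an assumption
    }


# Simulate the environment a container would see with app-config injected.
print(load_config({"ENV": "production", "LOG_LEVEL": "info"}))
```

Because the image only reads environment variables, the same image can run in any environment with a different ConfigMap.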

7️⃣ Secrets — Sensitive Data Handling

🧠 Theory

Secrets store:

- Passwords

- Tokens

- Certificates

They are:

- Base64 encoded (not encrypted by default)

- Protected by RBAC
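To see why Base64 encoding is not protection, the short sketch below encodes and decodes a sample value using only the Python standard library. The value `secret123` is just an example string. Anyone who can read the Secret object can reverse the encoding the same way.

```python
import base64

# Encode a value the way it appears in a Secret manifest's data field.
encoded = base64.b64encode(b"secret123").decode("ascii")
print(encoded)  # c2VjcmV0MTIz

# Decoding needs no key or password.
decoded = base64.b64decode(encoded).decode("utf-8")
print(decoded)  # secret123
```

This is why restricting read access with RBAC and enabling encryption at rest matter for Secrets.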

🛠 Practical Example

    kubectl create secret generic db-secret \
      --from-literal=password=secret123

Use it:

    env:
      - name: DB_PASSWORD
        valueFrom:
          secretKeyRef:
            name: db-secret
            key: password

Enterprise Best Practice

- Enable encryption at rest

- Integrate external secret stores later (Week 5+)

8️⃣ Labels & Selectors — The Glue of Kubernetes

🧠 Theory

Labels:

- Are arbitrary key/value pairs

- Drive selection logic

- Power Services, Deployments, policies

Selectors match labels.

🛠 Practical

    kubectl get pods -l app=web
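The matching rule behind a selector like `app=web` can be sketched as follows. This is an illustrative model only: `parse_selector` and `select` are hypothetical helpers, and they handle just the `key=value` form joined by commas. kubectl also accepts other operators (such as `!=` and set-based expressions) that are not covered here.

```python
def parse_selector(text):
    """Parse an equality-based selector such as "app=web,tier=frontend"."""
    selector = {}
    for part in text.split(","):
        key, _, value = part.partition("=")
        selector[key.strip()] = value.strip()
    return selector


def select(pods, selector):
    """Return the names of Pods whose labels contain every selector pair."""
    return [
        name
        for name, labels in pods.items()
        if all(labels.get(k) == v for k, v in selector.items())
    ]


pods = {
    "web-1": {"app": "web", "tier": "frontend"},
    "web-2": {"app": "web", "tier": "backend"},
    "db-1": {"app": "db"},
}

print(select(pods, parse_selector("app=web")))
print(select(pods, parse_selector("app=web,tier=frontend")))
```

A Pod matches only when it carries every pair in the selector. Extra labels on the Pod are ignored.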
