Kubernetes Controllers are the core engine that keeps your cluster running reliably.

If you want to truly understand how Kubernetes works internally, you must understand controllers and the reconciliation loop.

In this guide, you will learn how Kubernetes Controllers maintain system state, enable self-healing, and automate operations, with real examples and hands-on labs.

Who This Guide Is For

This guide is designed for:

- Beginners learning Kubernetes from scratch

- DevOps engineers preparing for real-world deployments

- Developers who want to understand Kubernetes internals

- Anyone confused about how Kubernetes self-healing actually works

How Kubernetes Actually “Thinks” and Self-Heals

This is where Kubernetes stops being just YAML and becomes a living system.

Week 1 gave you building blocks.

If you are new to Kubernetes, it’s important to first understand the building blocks before diving into controllers. You can read my detailed guide on Kubernetes Core Objects (Week 1) to build a strong foundation.

Week 2 explains how those blocks stay alive, recover, and stay consistent.

Why Week 2 Is Critical

Most engineers know:

- Pods

- Deployments

- Services

But they don’t understand:

Why Kubernetes keeps fixing things automatically

That logic comes from:

Controllers + Reconciliation Loops

If you understand this, you understand Kubernetes itself.

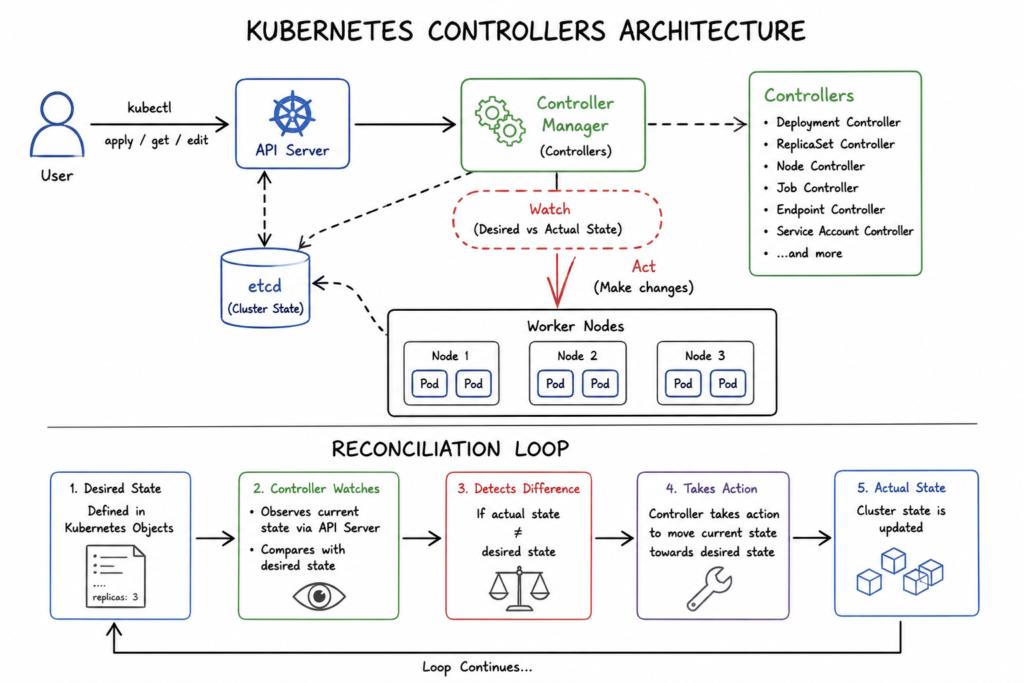

What Is a Controller? (Core Concept)

A controller is a loop that:

- Watches the cluster state

- Compares it with desired state

- Takes action if there is a mismatch

This runs continuously, not once.

Kubernetes controllers are part of the core architecture described in the official Kubernetes documentation.

You can explore how controllers work in detail in the Kubernetes official concepts guide.

Because this loop runs continuously, Kubernetes corrects inconsistencies on its own, without waiting for an operator to notice them. That is the basis of its reliability.

The Reconciliation Loop (Most Important Concept)

Kubernetes operates on a simple loop:

    Desired State → Actual State → Compare → Fix → Repeat

Example:

You declare:

    replicas: 3

Reality:

    Only 2 Pods running

Controller action:

    Create 1 more Pod

This loop never stops.
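The compare-and-fix step can be sketched in a few lines of Python. This is a simplified illustration, not Kubernetes source code: the function name `reconcile` and the action strings are made up for this example, and a real controller issues API calls instead of returning text.

```python
def reconcile(desired_replicas, running_pods):
    """Return the actions needed to move the actual state toward the desired state."""
    diff = desired_replicas - len(running_pods)
    if diff > 0:
        # Too few Pods: create the missing ones.
        return ["create pod"] * diff
    if diff < 0:
        # Too many Pods: delete the surplus (which ones to pick is arbitrary here).
        return ["delete " + name for name in running_pods[:-diff]]
    # Desired and actual already match: nothing to do.
    return []


print(reconcile(3, ["pod-a", "pod-b"]))                    # ['create pod']
print(reconcile(2, ["pod-a", "pod-b", "pod-c", "pod-d"]))  # ['delete pod-a', 'delete pod-b']
```

Calling the function again after the actions are applied returns an empty list, which is why the loop can safely run forever.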

Real-World Analogy

Think of Kubernetes like a thermostat:

- You set temperature → desired state

- Room temperature changes → actual state

- System reacts → heater ON/OFF

Kubernetes does the same with applications.

Types of Controllers (Very Important)

Kubernetes has multiple controllers, each responsible for one kind of resource.

1. Deployment Controller

Manages:

- ReplicaSets

- Rolling updates

- Rollbacks

2. ReplicaSet Controller

Ensures:

- Correct number of Pods running

3. Node Controller

Handles:

- Node health

- Pod eviction if node fails

4. Job Controller

Manages:

- One-time tasks (batch jobs)

5. CronJob Controller

Handles:

- Scheduled jobs (like cron)

How Kubernetes Controllers Work Internally (Deep Dive)

The built-in Kubernetes Controllers run as continuous control loops inside the kube-controller-manager process.

Each controller:

- Watches resources via the Kubernetes API Server

- Compares the desired state (the object's spec) with the observed state, reading both through the API Server rather than directly from etcd

- Takes action when differences are detected

For example:

- Deployment Controller creates and scales ReplicaSets, which in turn maintain the replica count

- Node Controller monitors node health

- Job Controller tracks task completion

These controllers do not run once. Instead, they keep a watch open on the API Server and re-run their reconciliation logic whenever a relevant object changes.

Because of this design, Kubernetes achieves:

- Self-healing

- Automatic scaling

- State consistency

This is what makes Kubernetes reliable in distributed environments.
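The watch-then-reconcile flow described in this section can be sketched as follows. It is a toy model under stated assumptions: the `WorkQueue` class and `run_controller` function are hypothetical names for this example, and the "fix" is reduced to copying the desired value. What it does show is a common design choice in controllers: several change notifications for the same object collapse into a single reconcile, and that reconcile reads the current state rather than the event payload.

```python
from collections import deque


class WorkQueue:
    """FIFO queue that holds each object key at most once."""

    def __init__(self):
        self._queue = deque()
        self._pending = set()

    def add(self, key):
        # A key that is already waiting is not queued a second time.
        if key not in self._pending:
            self._pending.add(key)
            self._queue.append(key)

    def get(self):
        key = self._queue.popleft()
        self._pending.discard(key)
        return key

    def __len__(self):
        return len(self._queue)


def run_controller(events, desired, actual):
    """Queue the changed keys, then reconcile each key once."""
    queue = WorkQueue()
    for key in events:
        queue.add(key)
    reconciled = []
    while len(queue):
        key = queue.get()
        # Reconcile from current state, not from whatever the event said.
        actual[key] = desired[key]
        reconciled.append(key)
    return reconciled


events = ["demo-deployment", "demo-deployment", "other", "demo-deployment"]
desired = {"demo-deployment": 3, "other": 1}
actual = {"demo-deployment": 2, "other": 0}
print(run_controller(events, desired, actual))  # ['demo-deployment', 'other']
print(actual == desired)                        # True
```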

Practical Lab 1: See Reconciliation in Action

Step 1: Create Deployment

Save the following manifest as deployment.yaml:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: demo-deployment
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: demo
      template:
        metadata:
          labels:
            app: demo
        spec:
          containers:
            - name: nginx
              image: nginx

Apply:

    kubectl apply -f deployment.yaml

Step 2: Verify Pods

    kubectl get pods

You should see 3 Pods running

Step 3: Break the System (Important)

Delete one Pod:

    kubectl delete pod <pod-name>

Step 4: Observe Self-Healing

    kubectl get pods

Kubernetes creates a new Pod automatically

What Happened Internally

- Deployment → desired = 3 Pods

- Actual = 2 Pods

- ReplicaSet Controller detects the mismatch

- New Pod created
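How does the controller know which Pods count toward the 3? It uses the label selector from the manifest (`app: demo`). The sketch below illustrates that matching step. It is a simplification: the Pod names are invented, the helper names `matches` and `pods_needed` are hypothetical, and real controllers also check ownership metadata, which is omitted here.

```python
def matches(selector, labels):
    """True if every key/value pair in the selector appears in the Pod's labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def pods_needed(replicas, selector, pods):
    """How many Pods must be created (positive) or removed (negative)."""
    owned = [pod for pod in pods if matches(selector, pod["labels"])]
    return replicas - len(owned)


# Cluster state right after one demo Pod was deleted (names are illustrative).
pods = [
    {"name": "demo-1", "labels": {"app": "demo"}},
    {"name": "demo-2", "labels": {"app": "demo", "tier": "web"}},
    {"name": "other-1", "labels": {"app": "other"}},
]

print(pods_needed(3, {"app": "demo"}, pods))  # 1
```

The Pod labeled `app: other` is ignored, and extra labels on a matching Pod do not disqualify it.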

Practical Lab 2: Scale the System

Step 1: Scale Deployment

    kubectl scale deployment demo-deployment --replicas=5

Step 2: Observe

    kubectl get pods

Now 5 Pods are running.

Step 3: Reduce Scale

    kubectl scale deployment demo-deployment --replicas=2

Kubernetes deletes the extra Pods.

Rolling Updates (Zero Downtime)

Controllers also manage updates safely.

Step 1: Update Image

    kubectl set image deployment/demo-deployment nginx=nginx:1.26

Step 2: Watch Rollout

    kubectl rollout status deployment/demo-deployment

Step 3: Check History

    kubectl rollout history deployment/demo-deployment

Step 4: Rollback

    kubectl rollout undo deployment/demo-deployment

What Happens Internally

- New ReplicaSet created

- Pods updated gradually

- Old Pods removed gradually as new ones become ready

- No downtime, as long as enough replicas stay ready throughout the rollout
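The gradual hand-over from the old ReplicaSet to the new one can be simulated in a few lines. This is a rough model, not the real rollout algorithm: the function name `rolling_update` is made up, the values `max_surge=1` and `max_unavailable=0` are chosen for readability and are not the Kubernetes defaults, and every new Pod is assumed to become ready instantly.

```python
def rolling_update(replicas, max_surge=1, max_unavailable=0):
    """Return the (old, new) Pod counts after each step of a simulated rollout.

    Assumes every new Pod becomes ready immediately, which real Pods do not.
    """
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("max_surge and max_unavailable cannot both be 0")
    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0 or new < replicas:
        # Scale up the new ReplicaSet as far as the surge limit allows.
        room = max(0, replicas + max_surge - (old + new))
        new += min(room, replicas - new)
        # Scale down the old ReplicaSet while keeping enough Pods available.
        removable = max(0, (old + new) - (replicas - max_unavailable))
        old -= min(removable, old)
        steps.append((old, new))
    return steps


print(rolling_update(3))  # [(3, 0), (2, 1), (1, 2), (0, 3)]
```

At every step the total number of Pods stays between 3 and 4, so capacity never drops below the declared replica count.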

Advanced Concept: Desired vs Actual State

Every controller compares:

| State Type | Meaning |
|---|---|
| Desired State | What you define in YAML |
| Actual State | What exists in the cluster |
| Drift | Difference between the two |

Controllers eliminate drift continuously.
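Drift can be thought of as a field-by-field comparison. The sketch below is an illustration only: the function name `drift` and the flat dictionaries are assumptions made for this example, whereas real Kubernetes objects are nested and compared by each controller in its own way.

```python
def drift(desired, actual):
    """Return {field: (desired_value, actual_value)} for every field that differs."""
    return {
        field: (want, actual.get(field))
        for field, want in desired.items()
        if actual.get(field) != want
    }


desired = {"replicas": 3, "image": "nginx:1.26"}
actual = {"replicas": 2, "image": "nginx:1.26"}

print(drift(desired, actual))   # {'replicas': (3, 2)}
print(drift(desired, desired))  # {}
```

An empty result means there is nothing to fix, which is the state every controller is trying to reach.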

Important Insight (Enterprise Level)

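Kubernetes is not purely event-driven

It is state-driven: events tell a controller that something changed, but every decision is based on the current state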

That means:

- It doesn’t react once

- It constantly ensures correctness
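The difference matters when an event is lost. The sketch below contrasts the two approaches using invented function names (`edge_triggered`, `level_triggered`) and a deliberately tiny model in which state is just a Pod count.

```python
def edge_triggered(actual, delete_events):
    """Create one replacement per delete event received.

    If an event is lost, the missing Pod is never replaced.
    """
    return actual + len(delete_events)


def level_triggered(desired, actual):
    """Compare desired and actual counts directly, ignoring how the gap arose."""
    missing = desired - actual
    return actual + missing


desired = 3
actual = 1                       # two Pods were deleted...
delete_events = ["pod_deleted"]  # ...but only one event was delivered

print(edge_triggered(actual, delete_events))  # 2: one Pod is still missing
print(level_triggered(desired, actual))       # 3: converges regardless
```

Because the state-driven version looks at what exists rather than at what was reported, a dropped or duplicated notification cannot leave the system permanently wrong.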

Enterprise-Level Patterns

Pattern 1: Immutable Infrastructure

Never modify running Pods manually

Always update via Deployment

Pattern 2: Declarative Everything

Always use:

    kubectl apply -f

Avoid:

    kubectl run

Pattern 3: Git as Source of Truth (Preview of Week 5)

Controllers + Git = GitOps

Common Mistakes (Avoid These)

- Manually editing Pods

- Deleting Pods and expecting the system to break

- Using kubectl imperatively in production

- Ignoring rollout status

Practical Lab 3: Simulate Node Failure

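(Optional if you are running a local cluster.)

    kubectl drain <node-name>

The Pods on that node are evicted, and their controllers create replacements that get scheduled onto other nodes automatically. If the node runs DaemonSet-managed Pods, the command needs the --ignore-daemonsets flag. Keep in mind that a drain is a planned eviction, not a real failure, and that the node stays unschedulable until you run kubectl uncordon <node-name>.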
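What a drain looks like from the cluster's point of view can be modeled roughly as follows. Everything here is illustrative: the function name `drain`, the Pod and node names, the `-replacement` suffix, and the round-robin placement are assumptions standing in for the real eviction and scheduling logic.

```python
from itertools import cycle


def drain(node, pods, nodes):
    """Evict Pods from `node` and place their replacements on the remaining nodes."""
    targets = [n for n in nodes if n != node]
    if not targets:
        raise ValueError("no other node can accept the evicted Pods")
    next_node = cycle(targets)  # round-robin stands in for the scheduler
    result = {}
    for name, where in pods.items():
        if where == node:
            # The evicted Pod is gone; its controller creates a replacement.
            result[name + "-replacement"] = next(next_node)
        else:
            result[name] = where
    return result


pods = {"demo-a": "node-1", "demo-b": "node-2", "demo-c": "node-1"}
print(drain("node-1", pods, ["node-1", "node-2", "node-3"]))
# {'demo-a-replacement': 'node-2', 'demo-b': 'node-2', 'demo-c-replacement': 'node-3'}
```

The total number of Pods is the same before and after, which is the point: the desired replica count is preserved even though the node is gone.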

Controllers = Automation Engine

Without controllers:

- No scaling

- No recovery

- No updates

- No reliability

Controllers are the core intelligence layer of Kubernetes

Week 2 Outcome

After this week, you understand:

✔ How Kubernetes maintains state

✔ Why self-healing works

✔ How scaling happens

✔ How rolling updates work

✔ How controllers enforce reliability

FAQs

What are Kubernetes Controllers?

Kubernetes Controllers are control loops that continuously monitor cluster state and ensure it matches the desired configuration.

What is the reconciliation loop?

The reconciliation loop is the process where Kubernetes compares desired state with actual state and corrects differences automatically.

Why is Kubernetes self-healing?

Kubernetes is self-healing because controllers automatically recreate failed Pods and maintain system stability.

Are controllers always running?

Yes, controllers continuously monitor and adjust the system state in real time.
